Shelf Blog: AI Deployment

The business world has changed dramatically with the rapid development of artificial intelligence. Tasks that used to take people hours can now be handled by AI in just a few minutes. But that has its limits, and in some situations a single agent is no longer enough....

An enterprise AI platform in 2026 is not just another AI tool. It is a unified, large-scale infrastructure environment in which AI agents can analyze data, make decisions, and interact with one another with virtually no human intervention. It is not an LLM API; it is something much bigger and more...

Agentic process automation, or APA, is a necessary and entirely logical evolution of RPA. The key difference between APA and RPA is the ability of AI agents to perform their tasks autonomously. This means they make decisions and adapt within business processes without relying on scripts....

AI models don’t think—they predict. When they generate false or misleading outputs, it’s because they’re filling in gaps based on patterns in their training data. This phenomenon, known as AI hallucination, leads to responses that sound correct but have no basis in reality. For AI leaders...

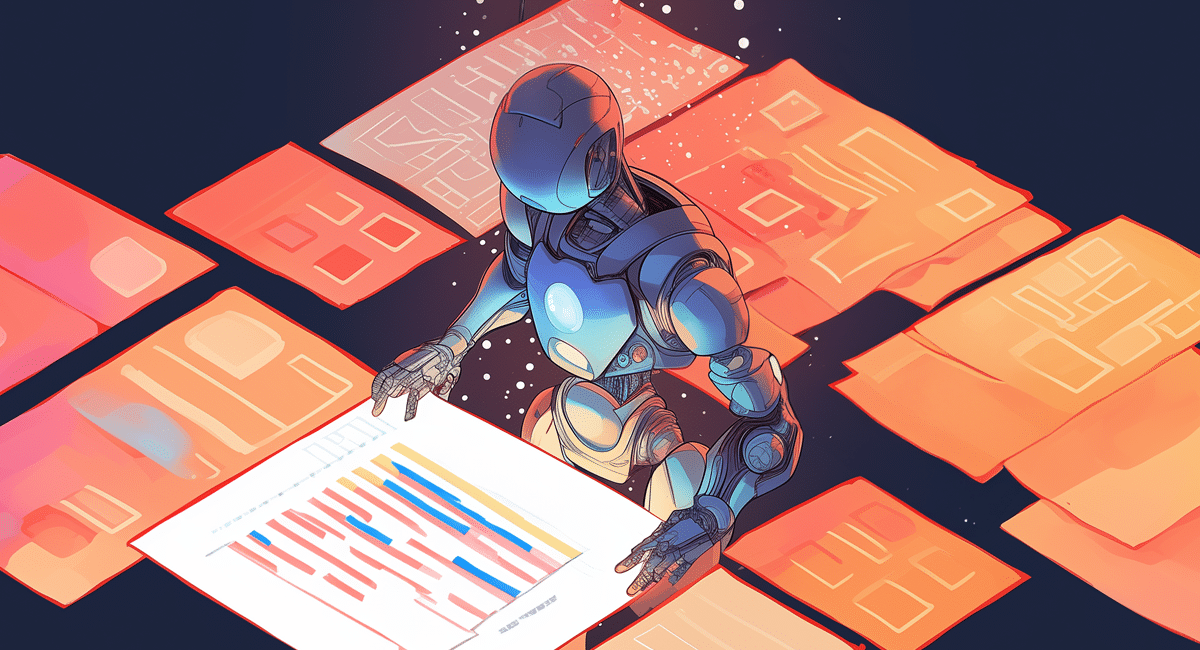

Making sure your data is ready for AI agents is critical for the success of your projects. As an AI leader or tech strategist, you understand the importance of data accuracy and integrity in AI models. Well-prepared data leads to more reliable outcomes, higher customer satisfaction, and better...

The world’s leading AI companies—OpenAI, Google, and Microsoft—are redefining what’s possible with enterprise AI. If your business is relying on a single-agent AI setup, you might be missing out on its full potential. Multi-agent AI systems take things to the next level. Unlike...