Machine learning (ML) offers powerful tools for predictive analytics, automation, and decision-making. By analyzing large volumes of data, ML models can uncover patterns and insights that drive efficiency, innovation, and competitive advantage for your organization. However, the true value of ML lies not just in developing sophisticated models but in deploying them successfully into production environments. Deployment is the critical phase where models move from theoretical constructs to practical tools that affect real-world business processes.

In this article, we explore the key aspects of deploying ML models, including system architecture, deployment methods, and the challenges you might face. By understanding these elements, you can ensure that your ML model deployments operate efficiently and effectively.

What is ML Model Deployment?

Machine learning (ML) model deployment refers to the process of making a trained ML model available for use in a production environment. This involves integrating the model into an existing system or application so that it can start making predictions based on new data.

Deployment ensures that the model can interact with other components, such as databases and user interfaces, to deliver real-time insights and automated decisions.

Developing vs Deploying ML Models

Development Phase: This phase focuses on designing, training, and validating the ML model. Data scientists and engineers experiment with different algorithms, tune hyperparameters, and ensure the model achieves the desired accuracy and performance on test data. The primary goal here is to create a model that performs well on historical data.

Deployment Phase: Once the model is trained and validated, it moves to the deployment phase. This involves integrating the model into a live production environment where it can process real-time data and provide actionable outputs. The emphasis shifts from experimentation to ensuring the model is reliable, scalable, and maintainable in a real-world setting.

Why Model Deployment is a Critical Phase in the ML Lifecycle

Deployment is where the theoretical value of the ML model translates into practical benefits for the organization. It’s the stage where the model begins to contribute to decision-making processes, automate tasks, and enhance overall efficiency.

System Architecture for ML Model Deployment

Deploying a machine learning model requires a robust system architecture to ensure seamless integration, scalability, and maintainability.

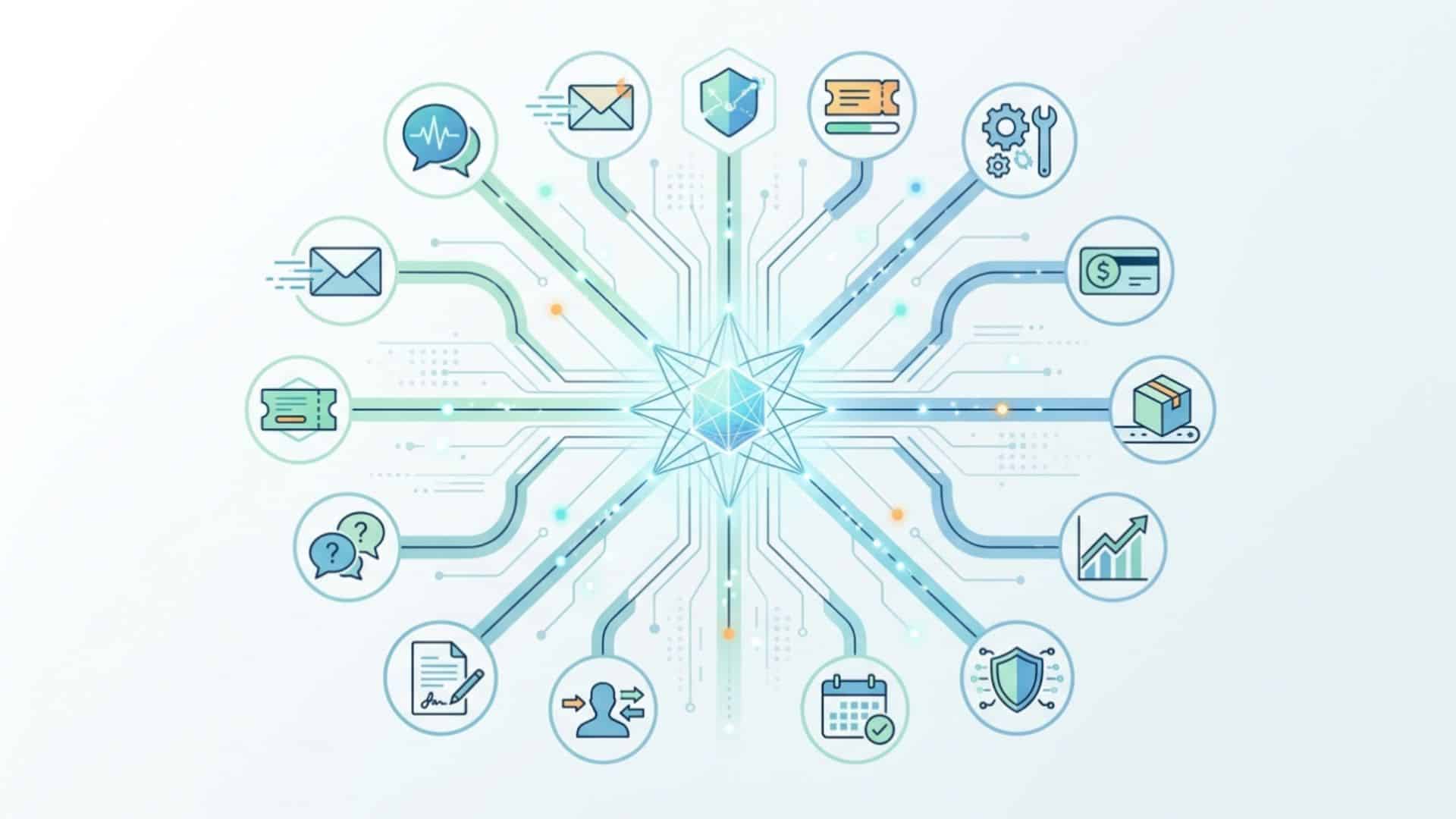

At a high level, a machine learning system can be divided into four main parts: the data layer, feature layer, scoring layer, and evaluation layer. Each layer plays a crucial role in the deployment process and the overall functionality of the ML system.

1. Data Layer

The data layer provides access to all the data sources that the model requires. This includes raw data, pre-processed data, and any additional data sources needed for generating features. This ensures the model has access to up-to-date and relevant data, enabling it to make accurate predictions.

Components of the Data Layer

- Data Storage: Databases, data lakes, or cloud storage solutions where data is stored and managed.

- Data Ingestion: Pipelines and tools that facilitate the collection, extraction, and loading of data from various sources.

2. Feature Layer

The feature layer is responsible for generating feature data in a transparent, scalable, and usable manner. Features are the inputs used by the ML model to make predictions. This layer ensures that features are generated consistently and efficiently, enabling the model to perform accurately and reliably.

Components of the Feature Layer

- Feature Engineering: Processes and algorithms used to transform raw data into meaningful features.

- Feature Storage: Databases or storage systems where features are stored and accessed.
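As a minimal sketch of what consistent feature generation can look like, the function below turns one raw record into model inputs. The record fields (`amount`, `timestamp`, `country`) and the function name `build_features` are hypothetical and chosen only for illustration.

```python
from datetime import datetime


def build_features(raw: dict) -> dict:
    """Turn one raw record into model-ready features.

    The field names used here are illustrative assumptions,
    not part of any particular system.
    """
    ts = datetime.fromisoformat(raw["timestamp"])
    return {
        "amount": float(raw["amount"]),
        "hour_of_day": ts.hour,
        # weekday() returns 5 for Saturday and 6 for Sunday.
        "is_weekend": int(ts.weekday() >= 5),
        "is_domestic": int(raw.get("country", "") == "US"),
    }


raw_record = {"amount": "42.50", "timestamp": "2024-03-09T14:30:00", "country": "US"}
features = build_features(raw_record)
print(features)
```

Using the same function during training and at prediction time is one way to keep features consistent between the two.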

3. Scoring Layer

The scoring layer transforms features into predictions. This is where the trained ML model processes the input features and generates outputs. Real-time or batch predictions are produced based on the input features, which enables automated decision-making and insights.

Components of the Scoring Layer

- Model Serving: Infrastructure and tools that host the ML model and handle prediction requests.

- Prediction APIs: Interfaces that allow other systems to interact with the model and retrieve predictions.

- Common Tools: scikit-learn is a widely used library for training and scoring models, although the right choice depends on the model type and the serving infrastructure.
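To make the step from features to predictions concrete, here is a small sketch of a scoring component. `LogisticScorer` is a toy stand-in for a trained model with hard-coded weights; the class, its method name, and the weights are assumptions for illustration and do not come from any specific library.

```python
import math


class LogisticScorer:
    """Toy stand-in for a trained model: fixed weights, no training code."""

    def __init__(self, weights: dict, bias: float):
        self.weights = weights
        self.bias = bias

    def predict_proba(self, features: dict) -> float:
        # Weighted sum of the known features, squashed into (0, 1).
        z = self.bias + sum(
            w * features.get(name, 0.0) for name, w in self.weights.items()
        )
        return 1.0 / (1.0 + math.exp(-z))


scorer = LogisticScorer(weights={"amount": 0.01, "is_weekend": 0.5}, bias=-1.0)
score = scorer.predict_proba({"amount": 50.0, "is_weekend": 1})
print(round(score, 3))
```

In a real system the scorer would wrap a model loaded from storage, but the interface (features in, prediction out) stays the same.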

4. Evaluation Layer

The evaluation layer checks the equivalence of two models and monitors production models. It is used to compare how closely the training predictions match the predictions on live traffic. This ensures that the model remains accurate and reliable over time and detects any degradation in performance.

Components of the Evaluation Layer

- Model Monitoring: Tools and processes that track model performance in real-time.

- Model Comparison: Techniques to compare the performance of different models or versions of the same model.

- Metrics and Logging: Systems that log predictions, track metrics, and provide alerts for anomalies.
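One simple way to check the equivalence of two models, or of offline and live predictions for the same inputs, is to compare their outputs within a tolerance. The sketch below assumes both prediction lists are aligned by input; the function names and the default tolerance are illustrative choices.

```python
def max_abs_difference(preds_a, preds_b):
    """Largest absolute gap between two aligned lists of predictions."""
    if len(preds_a) != len(preds_b):
        raise ValueError("prediction lists must have the same length")
    if not preds_a:
        raise ValueError("prediction lists must not be empty")
    return max(abs(a - b) for a, b in zip(preds_a, preds_b))


def models_equivalent(preds_a, preds_b, tolerance=1e-6):
    """True when every pair of predictions agrees within the tolerance."""
    return max_abs_difference(preds_a, preds_b) <= tolerance


offline_preds = [0.10, 0.80, 0.35]
live_preds = [0.10, 0.80, 0.35]
shifted_preds = [0.10, 0.70, 0.35]

print(models_equivalent(offline_preds, live_preds))
print(models_equivalent(offline_preds, shifted_preds))
```

A mismatch here often points to a difference in feature generation between training and production rather than a problem in the model itself.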

5 ML Model Deployment Methods to Know

Choosing the right deployment method for your ML model depends on your specific needs and constraints. One-off, batch, and real-time deployments offer general solutions for various scenarios, while streaming and edge deployments cater to specialized use cases requiring continuous processing and localized predictions.

1. One-Off Deployment

One-off deployment involves running the model to generate predictions on a specific dataset at a particular point in time. This method is often used for initial testing or when predictions are needed for a static dataset. It’s simple and requires minimal infrastructure, but is not suitable for ongoing or real-time predictions.

2. Batch Deployment

Batch deployment processes a large set of data at regular intervals. The model is applied to batches of data to generate predictions, which are then used for analysis or decision-making.

This method is efficient for handling large volumes of data and is easier to manage and monitor. However, there is a latency between data collection and prediction, making it unsuitable for real-time applications.
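A batch job can be sketched as a loop that scores fixed-size chunks of rows. In the example below, `toy_predict_batch` is a hypothetical stand-in for a real model, and the batch size is an arbitrary assumption.

```python
from itertools import islice


def batched(rows, batch_size):
    """Yield consecutive lists of at most batch_size rows."""
    it = iter(rows)
    while True:
        batch = list(islice(it, batch_size))
        if not batch:
            return
        yield batch


def score_in_batches(rows, predict_batch, batch_size=1000):
    """Apply predict_batch to each chunk and collect results in order."""
    results = []
    for batch in batched(rows, batch_size):
        results.extend(predict_batch(batch))
    return results


def toy_predict_batch(batch):
    # Hypothetical rule standing in for a trained model.
    return [row["amount"] > 100 for row in batch]


rows = [{"amount": a} for a in (20, 150, 99, 300, 101)]
predictions = score_in_batches(rows, toy_predict_batch, batch_size=2)
print(predictions)
```

In practice the same loop would typically be triggered by a scheduler at the chosen interval and would write results to storage instead of returning them.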

3. Real-Time Deployment

Real-time deployment involves making predictions instantly as new data arrives. This requires the model to be integrated into a system that can handle real-time data input and output. It is ideal for applications like live customer support chatbots, real-time recommendations, and autonomous vehicles.

The main advantage is immediate predictions and actions, which enhances the user experience and engagement. However, it requires robust infrastructure and low-latency systems, so it’s more complex to implement and maintain.
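At its core, a real-time deployment is a request handler that accepts input, calls the model, and returns a prediction. The sketch below shows only that handler, without a web framework; the JSON shape with a `features` key and the name `handle_prediction_request` are assumptions for illustration.

```python
import json


def handle_prediction_request(body: str, predict) -> tuple:
    """Return (status_code, json_body) for one prediction request."""
    try:
        features = json.loads(body)["features"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return 400, json.dumps({"error": "expected JSON with a 'features' object"})
    if not isinstance(features, dict):
        return 400, json.dumps({"error": "'features' must be an object"})
    return 200, json.dumps({"prediction": predict(features)})


def toy_predict(features):
    # Hypothetical rule standing in for a trained model.
    return features.get("amount", 0) > 100


status, response = handle_prediction_request('{"features": {"amount": 250}}', toy_predict)
print(status, response)
```

Keeping the handler independent of the web server makes it easy to test and to reuse behind whichever serving layer the environment provides.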

4. Streaming Deployment

Streaming deployment is designed for continuous data streams, processing data as it flows in and providing near-instantaneous predictions. This method is used in financial market analysis, real-time monitoring, and IoT sensor data processing. The downside is that it requires specialized tools and technologies and carries high infrastructure and maintenance costs.

5. Edge Deployment

Edge deployment involves deploying the model on edge devices like smartphones, IoT devices, or embedded systems. The model runs locally on the device, reducing the need for constant connectivity to a central server. This method is used for predictive maintenance, personalized mobile app experiences, and autonomous drones.

By processing data locally on the edge device, the need to transmit sensitive data to external servers or the cloud is minimized. This reduces the attack surface and the potential points of vulnerability. Even if an attacker gains access to the communication channel, they would only have access to the limited data being transmitted, rather than the entire dataset stored on a central server.

Edge deployment also allows organizations to maintain better control over their data and ensure compliance with data protection regulations, such as GDPR or HIPAA. By keeping the data within the device and processing it locally, organizations can avoid the complexities and risks associated with storing and processing data in external environments.

Deploying ML Models in Production

Deploying machine learning models in production involves several critical steps. By following these steps, you can ensure your ML model operates efficiently and delivers consistent value in a production setting.

Step 1: Model Preparation

Before deployment, you need to prepare your model. This includes finalizing the model architecture, training it on the latest dataset, and validating its performance.

Ensure the model meets the required accuracy and performance benchmarks. Document all the steps, hyperparameters, and configurations used during the training phase for reproducibility.
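One way to document training details for reproducibility is to store them next to the serialized model. The sketch below uses Python's standard `pickle`, `hashlib`, and `json` modules; the function name `build_model_record` and the metadata fields are illustrative assumptions, and the dictionary passed as `model` is a placeholder for a real trained model.

```python
import hashlib
import json
import pickle


def build_model_record(model, hyperparameters: dict, metrics: dict) -> dict:
    """Bundle a serialized model with the details needed to reproduce it."""
    model_bytes = pickle.dumps(model)
    return {
        "model_bytes": model_bytes,
        "metadata": {
            # The hash lets you confirm later which artifact was deployed.
            "sha256": hashlib.sha256(model_bytes).hexdigest(),
            "hyperparameters": hyperparameters,
            "metrics": metrics,
        },
    }


record = build_model_record(
    model={"weights": [0.1, 0.2]},  # placeholder for a trained model
    hyperparameters={"learning_rate": 0.05, "max_depth": 4},
    metrics={"accuracy": 0.93},
)
metadata_json = json.dumps(record["metadata"], indent=2, sort_keys=True)
print(metadata_json)
```

Note that pickled files should only be loaded from trusted sources, since unpickling can execute arbitrary code.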

Step 2: Deployment Environment

Choose a suitable deployment environment based on your application needs. This could be a cloud service, on-premises server, or edge device. The environment should support the necessary libraries and frameworks your model requires. It should also provide scalability options to handle varying loads.

Step 3: Containerization

Containerization is a key step in modern ML deployment. Using tools like Docker, you can package the model along with its dependencies into a container. This ensures consistency across different environments and simplifies the deployment process. Create a Dockerfile specifying the base image, required libraries, and the model files.

Step 4: Deploy the Containerized Model

Once the model is containerized, deploy it to the chosen environment. Use container orchestration tools like Kubernetes for managing deployments at scale. Ensure that the deployment setup includes load balancing, so the model can handle multiple requests efficiently. Test the deployed model thoroughly to ensure it performs as expected.

Step 5: Monitor and Scale

After deployment, continuously monitor the model’s performance. Set up logging and monitoring tools to track key metrics such as latency, throughput, and error rates. Use these insights to identify and resolve any issues. Implement auto-scaling policies to adjust the resources based on demand, ensuring the model can handle high loads without performance degradation.
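The metrics mentioned above can be collected with a thin wrapper around each prediction call. The following is a minimal in-memory sketch; `RequestMonitor` is a hypothetical class, and a production setup would usually export these numbers to a dedicated monitoring system rather than keep them in a list.

```python
import time


class RequestMonitor:
    """Records latency and errors for calls made through observe()."""

    def __init__(self):
        self.latencies = []
        self.errors = 0

    def observe(self, func, *args):
        start = time.perf_counter()
        try:
            return func(*args)
        except Exception:
            self.errors += 1
            raise
        finally:
            # Latency is recorded for failed calls as well.
            self.latencies.append(time.perf_counter() - start)

    def summary(self) -> dict:
        total = len(self.latencies)
        if total == 0:
            return {"requests": 0, "error_rate": 0.0, "p95_latency_s": 0.0}
        ordered = sorted(self.latencies)
        # Nearest-rank 95th percentile using integer arithmetic.
        rank = (95 * total + 99) // 100
        return {
            "requests": total,
            "error_rate": self.errors / total,
            "p95_latency_s": ordered[rank - 1],
        }


monitor = RequestMonitor()
monitor.observe(lambda x: x * 2, 21)
try:
    monitor.observe(lambda x: 1 / x, 0)
except ZeroDivisionError:
    pass
report = monitor.summary()
print(report["requests"], report["error_rate"])
```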

Step 6: Continuous Integration and Deployment (CI/CD)

Implement a CI/CD pipeline to automate the deployment process. This pipeline should include steps for automated testing, building, and deployment of the model.

Tools like Jenkins, GitLab CI, or Azure DevOps can help streamline these processes. CI/CD ensures that any updates or improvements to the model can be deployed quickly and reliably.
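An automated testing step in such a pipeline often includes a gate that decides whether a candidate model may replace the one in production. The sketch below is one possible gate; the function name `should_promote`, the metric name `accuracy`, and both thresholds are assumptions to adapt to your own requirements.

```python
def should_promote(candidate, production, min_accuracy=0.90, max_regression=0.01):
    """Return (decision, reason) for promoting a candidate model.

    Both arguments are dicts of evaluation metrics. The thresholds
    are illustrative defaults, not recommended values.
    """
    accuracy = candidate["accuracy"]
    if accuracy < min_accuracy:
        return False, "candidate is below the minimum accuracy"
    if accuracy < production["accuracy"] - max_regression:
        return False, "candidate regresses against production"
    return True, "candidate meets all checks"


decision, reason = should_promote({"accuracy": 0.93}, {"accuracy": 0.92})
print(decision, reason)
```

A CI/CD job can call a check like this after evaluation and stop the pipeline when the decision is negative.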

Step 7: Post-Deployment Maintenance

Maintaining the model post-deployment is important for its long-term success. Regularly update the model with new data to keep it relevant and accurate. Monitor for model drift, where the model’s performance degrades over time due to changes in the input data.

Schedule periodic retraining and redeployment of the model to maintain its effectiveness. Ensure robust logging and alerting mechanisms are in place to quickly address any issues that arise.

Challenges in Deploying ML Models

Deploying ML models to production environments involves several challenges that can impact their performance, scalability, and reliability. Understanding these challenges is important for developing strategies to overcome them and ensuring successful deployment.

Technical Challenges

- Integration with Existing Systems: Integrating ML models with existing IT infrastructure and business applications can be complex. Creating seamless communication between the model and other systems requires APIs and middleware.

- Scalability: ML models need to handle varying loads efficiently. Scaling the model to serve a large number of requests without compromising performance can be difficult.

Operational Challenges

- Monitoring and Maintenance: Post-deployment, models require continuous monitoring to ensure they perform well in a production environment. This includes tracking key performance metrics, detecting anomalies, and addressing any issues promptly.

- Data Privacy and Security: Ensuring that the data used by the model complies with privacy regulations and is secure from breaches is critical. This involves implementing strong data governance policies and secure data handling practices.

Data Challenges

- Data Quality and Consistency: The model’s performance heavily relies on the quality and consistency of the data it processes. Inconsistent or poor-quality data can lead to inaccurate predictions and degraded model performance.

- Handling Unstructured Data: Many business applications require processing unstructured data such as text, images, and videos. Developing models that can handle and extract insights from unstructured data presents additional complexities.

- Data Silos: Data stored in isolated silos across different departments or systems can hinder the model’s ability to access and utilize all relevant information. Breaking down these silos and integrating data sources is necessary for comprehensive model performance.

Model-Specific Challenges

- Model Drift: Over time, the statistical properties of the input data can change, causing the model’s performance to degrade. Detecting and addressing model drift through regular retraining and updates is essential.

- Interpretability and Explainability: Complex models, especially deep learning models, can be challenging to interpret. Ensuring that the model’s decisions can be explained and understood by stakeholders is important for trust and regulatory compliance.

- Latency Requirements: For real-time applications, the model must provide predictions with minimal latency. Achieving low latency while maintaining accuracy and reliability requires optimization of the model and the deployment environment.
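For the model drift item above, one common way to quantify a change in the statistical properties of an input feature is the population stability index (PSI), which compares the binned distribution of recent values against a baseline. The sketch below is a simplified version with equal-width bins taken from the baseline range; the bin count and the small floor used to avoid division by zero are assumptions, and any alerting threshold should be tuned for your data.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample (expected) and a recent sample (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back when all baseline values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = list(range(100))
shifted = [v + 30 for v in baseline]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
print(psi_same, psi_shifted > psi_same)
```

A PSI near zero suggests the distributions match, while larger values indicate a shift that may justify investigation or retraining.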

Preparing Your Organization for ML Deployment

Deploying ML models successfully requires not only technical readiness but also organizational preparedness. Here are key steps to ensure your organization is well-prepared for ML deployment:

Building a Data-Driven Culture

A data-driven culture is essential. Promote data literacy among employees to ensure they understand the importance of data and how it can drive decision-making. Encourage collaboration between data scientists, engineers, and stakeholders so there is alignment and strong communication.

Investing in the Right Tools and Technologies

Equip your organization with the right tools and technologies to support ML deployment. Adopt ML platforms and frameworks that facilitate model development, training, and deployment. Utilize automation tools for deployment and monitoring to streamline processes and reduce manual intervention.

Ensuring Data Availability and Quality

High-quality data is key. Ensure that you have access to comprehensive and clean datasets. Implement data governance practices to maintain data integrity, consistency, and security. Address data silos by integrating data from different sources to provide a holistic view for model training and deployment.

Developing Robust Infrastructure

Reliable infrastructure underpins every ML deployment. Invest in scalable computing resources, whether on-premises or in the cloud, to handle the computational demands of ML models. Ensure your infrastructure can support real-time data processing and high availability.

Implementing Continuous Learning and Adaptation

The field of ML is rapidly evolving, and staying updated with the latest advancements is critical. Encourage continuous learning and adaptation within your organization. Provide ongoing training and development opportunities for your teams to enhance their skills and knowledge.

Establishing Clear Processes and Guidelines

Establish clear processes and guidelines for ML model deployment. Define roles and responsibilities for different teams involved in the deployment process. Develop standardized workflows for model development, testing, deployment, and monitoring. Implement best practices for version control, documentation, and reproducibility.

Focusing on Ethical and Responsible AI

Ethical considerations are paramount when deploying ML models. Ensure your models are designed and deployed with fairness, transparency, and accountability in mind. Develop policies to address potential biases and ensure that your models comply with regulatory requirements and ethical standards.

Encouraging Cross-Functional Collaboration

Successful ML deployment requires collaboration across different functions within the organization. Foster communication and collaboration between data scientists, engineers, business analysts, and domain experts. This ensures that the deployed models align with business objectives and deliver actionable insights.

The Full Potential of ML

Deploying machine learning models is a complex but essential step in leveraging the power of AI. By following these guidelines and strategies, you can overcome the challenges associated with ML deployment and unlock the full potential of your models. This will enhance operational efficiency and provide your organization with a competitive edge in an increasingly data-driven world.