Natural Language Processing (NLP) is an interdisciplinary field blending computer science, artificial intelligence, and linguistics. It aims to enable computers to understand, interpret, and engage with human language in both written and spoken forms. NLP combines computational linguistics with machine learning and deep learning techniques and encompasses subfields such as Natural Language Understanding (NLU) and Natural Language Generation (NLG).

NLP has evolved significantly over decades, finding applications in diverse sectors from business intelligence to medical research, and is prevalent in everyday technology such as digital assistants and speech-to-text software. This technology not only processes and analyzes vast amounts of language data but also extracts insights and responds in real-time, bridging the communication gap between humans and machines.

Core Components and Technologies of NLP

NLP involves a range of technologies and methodologies to enable machines to understand and interact with human language. The core components of NLP encompass various aspects of linguistics and computational techniques, each contributing uniquely to the comprehension and generation of language. Here are some of the pivotal components and technologies in NLP:

Syntax and Semantics Analysis

Syntax analysis, or parsing, examines how words are organized into sentences. It uses algorithms to identify the grammatical structure of a sentence, such as the roles played by nouns, verbs, and adjectives, and to check that structure against the rules of the language.

Semantics analysis focuses on the meaning behind the words and sentences. It seeks to understand the interpretations of phrases and texts, going beyond just the dictionary meanings of words to comprehend context, intent, and conceptual significance.

Speech Recognition

Speech recognition technology converts spoken language into text. It is a critical aspect of NLP, enabling machines to process and understand human speech. This technology involves analyzing audio signals, detecting phonemes (individual units of sound), and interpreting them as words and sentences. Advanced speech recognition systems can handle varied accents and speech patterns and can filter out background noise.

Natural Language Generation (NLG) and Machine Translation

Natural Language Generation (NLG) is the process of producing meaningful phrases and sentences in the form of natural language from some internal representation. It involves constructing a coherent narrative or response from structured data, and it’s widely used in applications like report generation, chatbots, and virtual assistants.

Machine translation is a significant application of NLP that involves automatically translating text or speech from one language to another. It encompasses the understanding of the source language and generating an equivalent text in the target language, maintaining the original meaning, tone, and context.

Machine Learning in NLP

Machine learning plays a pivotal role in NLP by enabling systems to automatically learn and improve from experience. ML algorithms are used for various NLP tasks like text classification, sentiment analysis, topic modeling, and more. With the advent of deep learning, more complex models like neural networks are used for advanced tasks, including sequence-to-sequence models for translation, and transformers for context-aware language understanding.

Contextual Understanding and Disambiguation

NLP systems must be capable of understanding context and disambiguating words and phrases that have multiple meanings. This requires sophisticated algorithms that can take into account the broader context of the conversation or text, rather than just analyzing sentences in isolation.
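To make disambiguation concrete, here is a minimal sketch in the spirit of the simplified Lesk approach: it picks the sense whose dictionary-style gloss shares the most words with the surrounding sentence. The sense inventory, glosses, and stopword list are hypothetical and hand-written for illustration; real systems draw on much larger lexical resources or learned contextual models.

```python
import re

# Hypothetical toy sense inventory: each sense of "bank" has a short gloss.
SENSES = {
    "bank": {
        "financial_institution": "an institution that accepts deposits and lends money to customers",
        "river_edge": "the sloping land alongside a river or stream of water",
    }
}

# Hypothetical stopword list, kept tiny for the example.
STOPWORDS = {"a", "an", "the", "of", "to", "and", "or", "that", "on", "in", "at", "i", "we", "my"}


def tokenize(text):
    """Lowercase the text and return the set of content words."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def disambiguate(word, sentence):
    """Pick the sense whose gloss shares the most words with the sentence."""
    context = tokenize(sentence) - {word}
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & tokenize(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(disambiguate("bank", "I need to deposit money at the bank"))
print(disambiguate("bank", "We sat on the bank of the river and watched the water"))
```

The first call resolves to the financial sense and the second to the river sense, purely because of word overlap with the glosses. Exact word matching is brittle ("deposit" does not match "deposits"), which is one reason modern systems rely on learned representations instead.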

Entity Recognition and Relationship Extraction

Entity recognition involves identifying key elements in text and classifying them into predefined categories, such as names of persons, organizations, and locations, time expressions, quantities, and monetary values. Relationship extraction aims to discern the relationships and interactions between these identified entities.
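As a rough illustration of the entity recognition step only, the sketch below tags a few entity types with hand-written regular expressions. The patterns and labels are hypothetical and deliberately narrow; production systems typically learn entity boundaries from labeled data rather than relying on fixed patterns.

```python
import re

# Hypothetical hand-written patterns, one per entity label.
PATTERNS = {
    "MONEY": re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?"),
    "DATE": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "ORG": re.compile(r"\b[A-Z][a-zA-Z]+ (?:Inc|Corp|Ltd)\b"),
}


def extract_entities(text):
    """Return (label, matched_text) pairs in the order they appear in the text."""
    found = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((match.start(), label, match.group()))
    return [(label, value) for _, label, value in sorted(found)]


text = "Acme Corp paid $1,250.50 to Globex Inc on 2024-03-15."
for label, value in extract_entities(text):
    print(label, value)
```

A relationship extraction step would build on this output, for example by linking the two organizations through the verb "paid".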

Sentiment Analysis

Sentiment analysis, or opinion mining, is a crucial application of NLP. It involves analyzing text data to understand the sentiment behind it, such as whether the sentiment is positive, negative, or neutral. This is particularly useful in areas like market analysis, social media monitoring, and customer feedback.
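One simple way to picture sentiment analysis is a lexicon-based scorer, sketched below. The word lists are hypothetical and tiny, and the negation handling only looks one word back; this is an illustration of the idea, not how production sentiment models work.

```python
import re

# Hypothetical miniature lexicons; real systems use far larger lexicons or trained models.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "slow"}
NEGATIONS = {"not", "never", "no"}


def sentiment(text):
    """Classify text as positive, negative, or neutral by counting lexicon hits."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        # Flip the polarity when the previous token is a negation word.
        if i > 0 and tokens[i - 1] in NEGATIONS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this product, the battery is great"))
print(sentiment("The delivery was not good"))
```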

Dialogue Systems and Chatbots

These are interactive systems capable of conversing with humans. They use NLP to understand user inputs and provide relevant, context-aware responses. This technology is fundamental in customer service chatbots, virtual assistants, and interactive voice response (IVR) systems.

By integrating these core components and technologies, NLP has become an indispensable tool in various applications, transforming the way we interact with machines and how they assist us in processing and understanding the vast landscape of human language.

NLP Applications in Business and Research

Natural Language Processing (NLP) has become a cornerstone in both the business world and the realm of academic research, offering a myriad of applications that harness the power of language data.

These applications are transforming how businesses interact with customers, gain insights, and streamline operations. Similarly, in research, NLP is unlocking new frontiers in data analysis and information processing. Below are key application areas where NLP is making a significant impact:

Chatbots and Virtual Assistants

In the business context, chatbots and virtual assistants have revolutionized customer service, providing instant, 24/7 assistance to customers. Using NLP, these systems can understand and respond to customer inquiries, perform tasks such as booking appointments or processing orders, and offer personalized recommendations.

In research, virtual assistants are being used to automate data collection, facilitate user interaction with research databases, and even assist in complex data analysis.

Sentiment Analysis and Customer Feedback

Businesses use sentiment analysis to gauge public opinion and customer satisfaction by analyzing data from social media, reviews, and customer feedback. This NLP application helps in understanding customer preferences, improving products and services, and tailoring marketing strategies.

In academic and market research, sentiment analysis is used to study social trends, public opinions on various issues, and consumer behavior, providing valuable insights for sociological and economic studies.

Text Classification and Clustering

Text classification in business involves categorizing documents such as emails, support tickets, and social media posts for efficient information management and response. Clustering, on the other hand, groups similar texts together, aiding in data organization and trend analysis.

Research applications of text classification and clustering include organizing large sets of academic articles, data categorization in qualitative research, and thematic analysis in social sciences.

Information Retrieval and Extraction

Businesses leverage NLP for information retrieval to find relevant documents and data from large databases, enhancing decision-making and business intelligence. Information extraction involves pulling specific, structured information from unstructured data sources, crucial in areas like market analysis and competitive intelligence.

In research, NLP facilitates the extraction of pertinent information from vast academic databases, assists in literature reviews, and enables the extraction of data points from published materials for meta-analyses and systematic reviews.
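A classic baseline for information retrieval is to weight terms by TF-IDF and rank documents by cosine similarity to the query. The sketch below assumes a small in-memory list of documents and uses one of several common IDF variants (log(N/df) + 1); function names such as `build_index` and `search` are illustrative, not part of any library.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Split text into lowercase alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def build_index(documents):
    """Compute IDF weights and a TF-IDF vector (as a dict) for every document."""
    tokenized = [tokenize(doc) for doc in documents]
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n_docs = len(documents)
    idf = {term: math.log(n_docs / df) + 1.0 for term, df in doc_freq.items()}
    vectors = [{t: c * idf[t] for t, c in Counter(tokens).items()} for tokens in tokenized]
    return idf, vectors


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query, documents):
    """Return document indices ranked from most to least similar to the query."""
    idf, vectors = build_index(documents)
    q_vec = {t: c * idf[t] for t, c in Counter(tokenize(query)).items() if t in idf}
    scores = [cosine(q_vec, vec) for vec in vectors]
    return sorted(range(len(documents)), key=lambda i: scores[i], reverse=True)


docs = [
    "Quarterly revenue report for the retail division",
    "Employee onboarding handbook and policies",
    "Retail market analysis and competitor pricing",
]
print(search("retail competitor analysis", docs))
```

The third document ranks first because it shares three query terms, the first document follows with one shared term, and the handbook ranks last with none.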

Automated Report Generation and Summarization

NLP enables automated generation of business reports, executive summaries, and briefing documents by collating and summarizing relevant information, saving time and resources.

Research fields benefit from automated summarization of lengthy academic papers and reports, making it easier to disseminate and communicate complex findings.
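A very simple form of automated summarization is extractive: score each sentence by how frequent its words are in the whole text and keep the top-scoring ones. The sketch below is a frequency-based baseline under that assumption, with a hypothetical stopword list; it does not generate new sentences the way modern abstractive models do.

```python
import re
from collections import Counter

# Hypothetical stopword list, kept short for the example.
STOPWORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "it", "that", "for", "on", "was"}


def content_words(text):
    """Lowercase alphabetic tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def summarize(text, max_sentences=1):
    """Return the highest-scoring sentences, kept in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence):
        # Average document-level frequency of the sentence's content words.
        tokens = content_words(sentence)
        return sum(freq[w] for w in tokens) / max(len(tokens), 1)

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in keep)


report = (
    "Sales grew in the third quarter. "
    "Sales growth came from new cloud customers. "
    "The office moved to a new building."
)
print(summarize(report))
```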

Language Translation Services

For businesses operating globally, NLP-powered translation services are indispensable for breaking language barriers, facilitating international trade, and localizing content for different regions.

In research, translation services allow for cross-cultural studies and access to research published in various languages, broadening the scope and collaboration in academia.

Predictive Text and Auto-Completion

In business communication tools, predictive text and auto-completion features streamline the writing process, increase efficiency, and reduce errors.

These features are also beneficial in research, especially in data entry and coding processes, by reducing the time and effort required for documentation.
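Predictive text can be approximated with a bigram model that counts which word tends to follow which. The sketch below trains on a tiny, hypothetical corpus; real auto-completion features rely on far larger corpora and neural language models, so treat this as an illustration of the principle only.

```python
import re
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count how often each word follows each other word."""
    following = defaultdict(Counter)
    for sentence in corpus:
        tokens = re.findall(r"[a-z']+", sentence.lower())
        for current, nxt in zip(tokens, tokens[1:]):
            following[current][nxt] += 1
    return following


def suggest(model, word, k=2):
    """Return up to k of the most frequent next words after `word`."""
    counts = model.get(word.lower(), Counter())
    return [w for w, _ in counts.most_common(k)]


# Hypothetical training sentences.
corpus = [
    "thank you for your email",
    "thank you for your patience",
    "thank you very much",
    "please find the report attached",
]
model = train_bigrams(corpus)
print(suggest(model, "you"))
```

After "you", the model suggests "for" first (seen twice) and "very" second (seen once); an unseen word simply yields no suggestions.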

NLP, with its diverse applications, is not just a technological tool but a strategic asset in the business world and a catalyst for innovation in research. It’s enabling smarter business operations, deeper customer insights, and more efficient research methodologies, marking its significance in the modern digital landscape.

How Does Natural Language Processing Integrate with Other Technologies?

Natural Language Processing (NLP) fits into the broader landscape of technology in a highly interoperable and synergistic manner. Its integration with other technologies both enhances NLP’s own capabilities and extends the functionality and efficiency of the technologies it supports.

Let’s take a look at how NLP interoperates within the technological ecosystem:

NLP, Artificial Intelligence (AI), and Machine Learning (ML)

NLP is a subset of AI and relies heavily on ML algorithms. It enhances AI systems with the ability to understand, interpret, and respond to human language. In turn, advancements in AI and ML, such as deep learning, improve NLP’s effectiveness in processing complex language patterns.

NLP, Data Analytics, and Big Data

NLP is essential in analyzing large volumes of unstructured text data. It works alongside big data technologies to extract meaningful insights from text, such as social media feeds, customer reviews, and documents, aiding in sentiment analysis, trend prediction, and decision-making processes.

NLP and Internet of Things (IoT)

In IoT ecosystems, NLP enables more natural interactions between users and smart devices. Voice-activated assistants in smart homes, for example, use NLP to interpret commands and provide responses, making the technology more user-friendly and accessible.

Robotics

In robotics, NLP is used to enhance human-robot interaction. Robots equipped with NLP can understand spoken or written instructions and provide more intuitive responses, making them more adaptable to human environments.

Cloud Computing

Cloud platforms often host NLP services, offering scalable and accessible language processing capabilities to businesses and developers. This integration allows for powerful, on-demand NLP functionalities without the need for extensive local computational resources.

Mobile and Web Applications

NLP is integral to many mobile and web applications, enabling features like chatbots, language translation, and voice-based search. This makes applications more interactive and user-friendly.

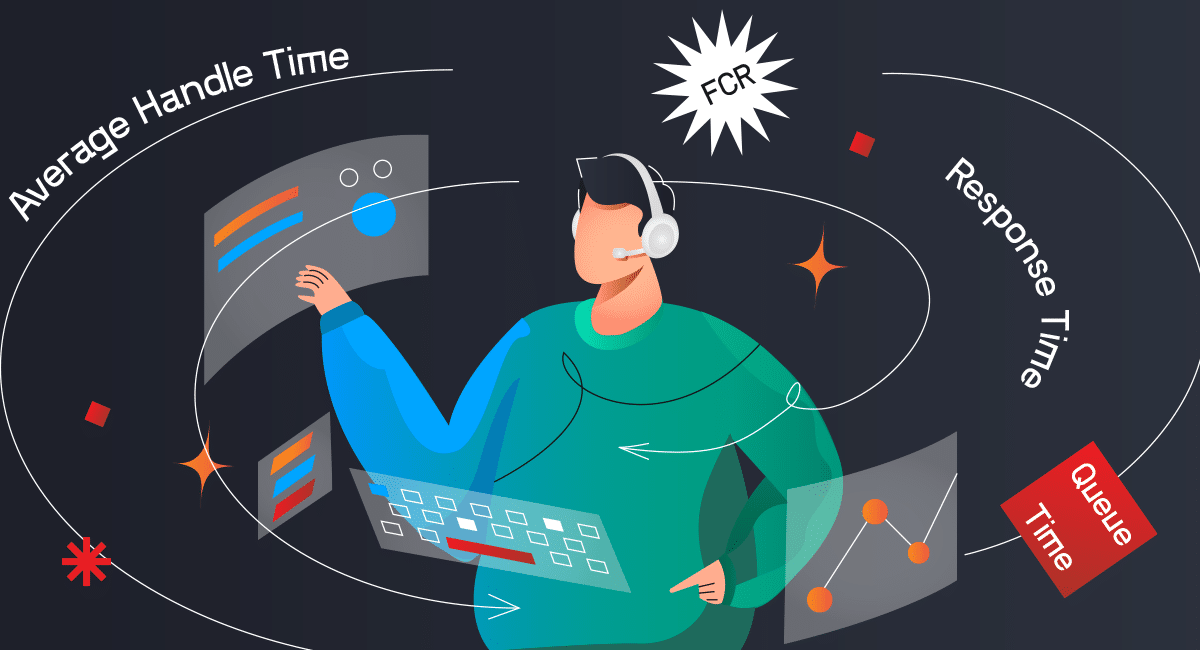

Customer Relationship Management (CRM) Systems

NLP enhances CRM systems by analyzing customer communication and feedback. It helps in sentiment analysis, customer support automation, and personalization of customer interactions.

Healthcare Technology

In healthcare, NLP works with electronic health records (EHR) systems to extract and interpret patient information. This assists in diagnosis, treatment planning, and research, contributing to more efficient and personalized patient care.

Financial Technology (FinTech)

NLP aids in fraud detection, risk assessment, and customer service in the financial sector. It can analyze financial documents, customer inquiries, and market trends to provide insights and automate services.

Educational Technology

NLP is used in educational software for language learning, essay grading, and personalized learning experiences. It analyzes student responses and adapts content to suit individual learning styles and needs.

Speech Recognition and Synthesis

Speech recognition and speech synthesis are closely related to NLP but are distinct technologies that work in tandem with it. NLP helps interpret transcribed speech and prepares the text that synthesis systems render as natural-sounding speech.

Virtual and Augmented Reality (VR/AR)

In VR/AR environments, NLP can be used to process voice commands or provide narrative content, enhancing user interaction and immersion within virtual worlds.

Cybersecurity

NLP aids in threat detection by analyzing communication patterns and identifying potential security breaches or malicious activities within textual data.

Legal Tech

NLP assists in legal document analysis, case research, and contract review by quickly processing and extracting relevant information from large volumes of legal texts.

E-Commerce and Retail

NLP enhances customer experience in e-commerce through personalized recommendations, search query understanding, and customer service automation.

NLP’s interoperability with various technology types significantly enhances its utility and applicability across different sectors. By integrating with these technologies, NLP not only adds value to existing systems but also opens up new possibilities for innovation and efficiency in the digital world.

How Does NLP Integrate with Other Types of AI Learning Models?

Natural Language Processing (NLP) integrates with various types of Artificial Intelligence (AI) learning models to enhance its capabilities in understanding and processing human language. Here are examples illustrating how NLP synergizes with different AI learning models.

Some Background: Rule-Based and Statistical Machine Learning Models

Early NLP systems were predominantly rule-based, relying on sets of handcrafted linguistic rules. These models were designed by language experts and involved explicit instructions for the computer to follow in processing language, such as grammar rules for parsing or matching patterns for conversation bots. While accurate within their defined rules, they lacked flexibility and struggled with the nuances and variability of natural language.

With the advent of statistical methods, NLP shifted towards models that learn from data. These models use statistical techniques to infer language structures and relationships from large datasets. Unlike rule-based systems, they can generalize from examples, making them more adaptable and capable of handling the complexity and subtlety of human language.
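For contrast with data-driven models, the handcrafted style of early systems can be sketched as an ordered list of pattern-and-response rules. The rules and replies below are hypothetical; they show why such systems are precise within their rules yet fail on anything the author did not anticipate.

```python
import re

# Hypothetical handcrafted rules: (pattern, response template) pairs checked in order.
RULES = [
    (re.compile(r"\b(?:hi|hello|hey)\b", re.I), "Hello! How can I help you?"),
    (re.compile(r"\bmy order (?:number )?is (\w+)", re.I), "Thanks, looking up order {0}."),
    (re.compile(r"\b(?:refund|return)\b", re.I), "I can help with returns. What is your order number?"),
]
FALLBACK = "Sorry, I did not understand that."


def respond(message):
    """Return the response of the first rule whose pattern matches the message."""
    for pattern, template in RULES:
        match = pattern.search(message)
        if match:
            return template.format(*match.groups())
    return FALLBACK


print(respond("Hello there"))
print(respond("My order number is A123"))
print(respond("What's the weather?"))
```

The last message falls through to the fallback reply, since no rule covers it. A statistical model would instead generalize from examples of similar questions.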

Supervised Learning

Supervised Learning is a type of machine learning where the model is trained on labeled data, learning to predict outputs from inputs.

- Text Classification: NLP uses supervised learning to categorize text into predefined classes, like spam detection in emails or sentiment analysis in social media posts.

- Named Entity Recognition (NER): This involves training models to identify and classify key information in text, such as names of people, locations, or dates. For example, NER can be implemented in customer support systems to identify and categorize key information like product names or issues from customer queries.
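The supervised text classification case can be sketched with a small multinomial Naive Bayes classifier trained on labeled examples. The training messages and labels below are hypothetical, and Naive Bayes is just one common baseline chosen for brevity, not the only or best supervised method.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Split text into lowercase alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayes:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.word_counts = defaultdict(Counter)
        self.label_counts = Counter(labels)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, doc_count in self.label_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(doc_count / total_docs)  # log prior
            for word in tokenize(text):
                if word in self.vocab:  # ignore words never seen in training
                    score += math.log((counts[word] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical labeled training data.
texts = [
    "win a free prize now",
    "free money claim your prize",
    "meeting agenda for monday",
    "please review the project report",
]
labels = ["spam", "spam", "ham", "ham"]
model = NaiveBayes().fit(texts, labels)
print(model.predict("claim your free prize"))
print(model.predict("project meeting on monday"))
```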

Unsupervised Learning

Unsupervised Learning is machine learning that involves training models on data without predefined labels, allowing the model to identify patterns and structures on its own.

- Topic Modeling: NLP leverages unsupervised learning to discover abstract topics within large sets of text documents, such as finding common themes in customer reviews. Topic modeling can be applied in content management systems to automatically categorize and tag articles or blogs based on their content.

- Word Embeddings: Techniques like Word2Vec or GloVe use unsupervised learning to map words to vectors in a shared space, where semantically similar words end up close together. Word embeddings can be utilized in search engines to enhance the relevance of search results by understanding semantic similarities between words.
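The intuition behind word vectors, that words used in similar contexts get similar vectors, can be shown without any training at all. The sketch below builds raw co-occurrence count vectors from a hypothetical toy corpus and compares them with cosine similarity. This is not Word2Vec or GloVe, which learn dense vectors from much larger corpora; it only illustrates the distributional idea they build on.

```python
import math
from collections import Counter, defaultdict


def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two count vectors stored as Counters."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical toy corpus.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
    "stocks fell on monday",
    "stocks rose on friday",
]
vectors = cooccurrence_vectors(corpus)
print(round(cosine(vectors["cat"], vectors["dog"]), 3))
print(round(cosine(vectors["cat"], vectors["stocks"]), 3))
```

Here "cat" and "dog" score far higher than "cat" and "stocks", because the first pair shares context words and the second pair shares none.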

Semi-Supervised Learning

Semi-Supervised Learning is a learning approach that combines a small amount of labeled data with a large amount of unlabeled data during training.

- Language Model Fine-tuning: Models like BERT or GPT are first pre-trained on large amounts of unlabeled text and then fine-tuned on smaller, task-specific labeled datasets, improving performance on tasks like question answering or text summarization. This approach may be used in personalized chatbots, where a pre-trained model is fine-tuned with specific user data to provide more personalized and context-aware responses.

Deep Learning

Deep Learning is a subset of machine learning involving neural networks with multiple layers that can learn increasingly abstract representations of the data.

The integration of deep learning into NLP has been transformative. Deep learning models, consisting of multi-layered neural networks, excel in capturing intricate patterns in data, making them exceptionally good at understanding language. These models automatically extract features from raw data (like text), a significant advancement over traditional methods that required manual feature extraction.

- Sequence-to-Sequence Models: Used in machine translation and speech recognition, where one sequence (like a sentence in English) is transformed into another (like a sentence in French). Employed in automated translation services, they may translate text or speech from one language to another while preserving the original context.

- Transformers: Models like BERT and GPT use transformer architectures, excelling in understanding context and nuances in language. Transformers may be used in advanced content recommendation systems to suggest relevant articles or media to users based on contextual understanding of their preferences.

Convolutional Neural Networks (CNNs)

Convolutional Neural Networks (CNNs) are a class of deep learning models known for their ability to detect patterns in images, but they are also applicable to sequential data like text.

- Text Classification: CNNs can be applied to NLP tasks such as classifying text based on its content. For example, they may be used in sentiment analysis tools for social media monitoring, classifying posts as positive, negative, or neutral.

Recurrent Neural Networks (RNNs) and LSTMs

RNNs and LSTMs are neural networks particularly suited for sequential data, where the output from the previous step is fed as input to the current step. They process inputs sequentially, maintaining an internal state that captures information about previous elements in the sequence. This makes them ideal for tasks where context is important, such as language modeling.

LSTMs are an advanced type of RNN designed to solve the problem of long-term dependencies. They are capable of learning which information to store and which to discard over long sequences, making them effective for complex NLP tasks that require understanding over longer texts.
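The recurrent update described above can be sketched in a few lines: each step combines the current input with the previous hidden state, so the final state depends on the order of the sequence. This is a plain (Elman-style) RNN cell with hypothetical fixed weights and no training; an LSTM adds gating on top of this basic loop.

```python
import math


def rnn_step(x, h_prev, W_xh, W_hh, b):
    """One recurrent update: h_t = tanh(W_xh x_t + W_hh h_prev + b)."""
    h_new = []
    for i in range(len(b)):
        total = b[i]
        total += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        total += sum(W_hh[i][j] * h_prev[j] for j in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new


def run_rnn(inputs, W_xh, W_hh, b):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = [0.0] * len(b)
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b)
    return h


# Hypothetical weights for a 2-dimensional input and a 2-dimensional hidden state.
W_XH = [[0.5, -0.3], [0.1, 0.8]]
W_HH = [[0.2, 0.0], [0.0, 0.2]]
BIAS = [0.0, 0.0]

word_a, word_b = [1.0, 0.0], [0.0, 1.0]
print(run_rnn([word_a, word_b], W_XH, W_HH, BIAS))
print(run_rnn([word_b, word_a], W_XH, W_HH, BIAS))
```

The two calls see the same inputs in a different order and end in different hidden states, which is the property that lets recurrent networks model word order.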

- Sentiment Analysis: These networks are effective in understanding the sequence and context in text, making them suitable for analyzing sentiments over time in a piece of writing. For example, they can be used in market trend analysis, to track consumer sentiment over time in relation to product launches or brand reputation.

- Speech Recognition: RNNs and LSTMs are used to process the temporal dynamics of speech for converting spoken language into text. They may be integrated into voice-controlled home automation systems, converting spoken commands into actions.

Transformers

A relatively recent development in NLP, transformers have quickly become the go-to model for a variety of tasks.

Unlike RNNs and LSTMs, transformers process entire sequences of data simultaneously, making them more efficient for parallel processing. Their key innovation is the attention mechanism, which allows the model to focus on different parts of the input sequence, providing a more nuanced understanding of context and relationships in the text.
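The attention mechanism can be illustrated with scaled dot-product attention for a single query: each key is scored against the query, the scores are turned into weights with a softmax, and the output is the weighted average of the values. The vectors below are hypothetical, and real transformers apply this in parallel across many queries and heads with learned projections, which this sketch omits.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


# Hypothetical key and value vectors for a three-token sequence.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([4.0, 0.0], keys, values)
print([round(w, 3) for w in weights])
print([round(o, 3) for o in output])
```

The query aligns with the first and third keys, so those positions receive most of the weight and dominate the output.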

Models like BERT (Bidirectional Encoder Representations from Transformers) and GPT (Generative Pre-trained Transformer) are based on this architecture and have set new standards in NLP performance.

Transfer Learning

Transfer Learning is a technique where a model developed for one task is reused as the starting point for a model on a second task.

- Pre-trained Models: Models pre-trained on large datasets (like OpenAI’s GPT) are adapted to specific NLP tasks, such as text generation or answering domain-specific questions. They may be utilized in automated customer service tools, where a model trained on a large dataset is adapted to understand industry-specific queries and jargon.

Generative Adversarial Networks (GANs)

Generative Adversarial Networks (GANs) are an approach in AI where two models, typically a generator and a discriminator, are trained simultaneously in a competitive manner.

- Data Augmentation: In NLP, GANs can be used to generate synthetic text data to augment training datasets, which is particularly useful when data is scarce or sensitive. For example, they can be used to create synthetic training datasets for NLP models in industries where data privacy is crucial, like healthcare.

Hybrid Models

Hybrid Models combine different types of neural networks or machine learning models to leverage the strengths of each.

- Sentiment Analysis and Entity Recognition: Different types of neural networks, like CNNs and RNNs, are combined to capture both the local features of text (word groupings) and the long-range dependencies (context) in tasks like sentiment analysis. Hybrid models may be implemented in financial news analysis tools, where the system extracts company names and analyzes the sentiment of news articles for market insights.

Graph Neural Networks (GNNs)

Graph Neural Networks (GNNs) are a type of neural network used to process data that is structured as graphs, capturing relationships between entities.

Attention Mechanisms

Attention Mechanisms are components of neural networks that weigh the importance of different input parts, improving the focus of the model on relevant elements.

- Contextual Understanding: Attention mechanisms, particularly in transformer models, help the model focus on relevant parts of the input text, enhancing the understanding of context and improving tasks like text summarization. They may be employed in advanced document summarization tools, where the system focuses on key parts of texts to generate concise summaries.

By integrating these diverse AI learning models, NLP can effectively tackle a wide array of language processing tasks, continually pushing the boundaries of how machines understand and interact with human language.

NLP Implementation Best Practices for IT Leaders and Data Scientists

As Natural Language Processing (NLP) continues to evolve and become more integral to business and technology strategies, IT leaders and data scientists face the challenge of effectively integrating and leveraging NLP in their organizations. Here are some best practices for IT leaders and data scientists to navigate this landscape successfully:

Building an NLP-centric Team and Skill Set

Specialized Hiring: Focus on building a team with specialized skills in NLP. This includes recruiting data scientists with expertise in machine learning, linguistics, and text analysis, as well as engineers experienced in implementing NLP technologies.

Continuous Training and Development: Encourage ongoing learning and professional development for team members. The field of NLP is rapidly advancing, and staying current with the latest tools, languages, and methodologies is crucial.

Cross-Disciplinary Collaboration: Foster a collaborative environment where team members with different expertise (e.g., data engineering, machine learning, linguistics) can work together effectively. Cross-disciplinary collaboration often leads to more innovative solutions.

Investing in Quality Datasets and Robust Infrastructures

Prioritizing High-Quality Data: Invest in acquiring and curating high-quality datasets. The performance of NLP models heavily depends on the quality of the training data.

Diverse and Representative Data: Ensure that the datasets are diverse and representative to avoid biases in the NLP models. This includes considering different languages, dialects, and demographic representations.

Scalable and Secure Infrastructure: Build a scalable and secure infrastructure that can support large-scale NLP tasks. This might involve cloud computing solutions, high-performance computing resources, and secure data storage and processing capabilities.

Staying Informed about Regulatory and Compliance Issues

Understanding Data Privacy Laws: Stay informed about data privacy laws and regulations, such as GDPR in Europe or CCPA in California. Ensure that NLP applications comply with these regulations, especially when processing sensitive or personal data.

Ethical Considerations: Be proactive about the ethical implications of NLP applications. This includes being transparent about how NLP models are used, particularly in customer-facing applications, and implementing fairness and bias checks.

Data Governance Policies: Establish robust data governance policies. This includes data collection, storage, usage, and sharing policies that align with regulatory and compliance requirements.

Innovation and Experimentation

Encouraging R&D Initiatives: Allocate resources for research and development initiatives in NLP. Encourage experimentation and innovation, which can lead to breakthroughs and competitive advantages.

Pilot Projects and Proof of Concepts: Before a full-scale rollout, implement pilot projects or proof of concepts to evaluate the feasibility and effectiveness of NLP solutions in a controlled environment.

Collaboration with External Experts and Vendors

Leveraging External Expertise: Collaborate with external experts, universities, and research institutions to stay at the forefront of NLP research and development.

Partnering with Reliable Vendors: Choose and work closely with reliable vendors for NLP tools and solutions. Evaluate their expertise, support capabilities, and alignment with your business needs.

By adhering to these best practices, IT leaders and data scientists can effectively harness the power of NLP, drive innovation, and ensure that their organizations remain at the cutting edge of this transformative technology.

9 Takeaways for IT Leaders about NLP

- NLP stands at the intersection of computer science, artificial intelligence, and linguistics, providing machines the ability to understand and interact with human language.

- The evolution of NLP, from rule-based to advanced machine learning and deep learning models, demonstrates its growing sophistication and integration across various sectors.

- NLP’s interoperability with other technologies, such as AI, ML, IoT, and cloud computing, has significantly expanded its utility and applicability, making it a versatile tool in the digital landscape.

- NLP holds transformative potential for businesses, evident in its diverse applications ranging from customer service automation to insightful data analysis and decision-making.

- The future trends in NLP, including advances in unsupervised learning, the significance of transfer learning, and integration with multimodal data, point towards even more innovative and efficient applications.

- Ethical considerations, bias mitigation, and real-time processing capabilities will shape the responsible and effective use of NLP in the future.

- IT leaders and data scientists are encouraged to build NLP-centric teams and invest in quality datasets and infrastructures to leverage the full potential of NLP.

- Staying informed about regulatory and compliance issues, as well as ethical considerations, is crucial in the responsible deployment of NLP technologies.

- Engaging with NLP requires a blend of strategic planning, continuous learning, and innovative experimentation, ensuring that NLP solutions align with business objectives and adapt to evolving technological landscapes.

NLP is not just a technological advancement but a strategic asset that can drive significant business transformations. IT leaders and data scientists are encouraged to actively engage with and explore NLP’s capabilities, keeping pace with its rapid developments to harness its full potential for organizational growth and success.