Large Language Models (LLMs) are powerful artificial intelligence tools, capable of generating insightful content, answering questions, and analyzing data—but they’re only as good as the prompts you give them.

Think of an LLM as a highly capable assistant who’s ready to help but needs clear instructions to deliver the best results. The quality of the input you provide has a direct impact on the output you get. Understanding how different types of inputs affect LLM performance is crucial if you want to get the most out of these tools.

This article explores the role of inputs in shaping accurate responses, the types of prompts that work best, and practical scenarios showing how careful input design can improve LLM effectiveness.

The Role of Inputs in Shaping LLM Outputs

LLMs are like skilled chefs in a kitchen: you give them ingredients, and they whip up a dish.

But as any good chef will tell you, the quality of the dish depends heavily on the ingredients you provide. Similarly, the input you feed into an LLM (the prompt, the context, and how clearly you phrase them) plays a huge role in determining the output you get.

Think of LLM inputs as the instruction manual for the model. If you give the model a well-crafted, specific prompt, you’re setting it up for success: clear, detailed instructions in plain language guide it toward exactly what you need. On the other hand, if your prompt is vague or lacks context, you might end up with a response that misses the mark.

Imagine you’re asking for travel advice. If you type in, “What should I do?” the model could go in any direction. It might talk about productivity tips, suggest random activities, or even misunderstand your intent completely.

But if you say, “What are some good spots to visit in Tokyo for a day trip?” suddenly, the response is much more tailored to your needs. This isn’t just about getting more information in the answer—it’s about getting the right information.

Garbage In, Garbage Out

Garbage in, garbage out is a phrase you’ve probably heard before, and it applies here. If your input is sloppy or unclear, the generative AI output will reflect that. You have to guide the model, like giving a tour guide clear directions about where you want to go instead of just saying, “Show me around.”

The power you have over the model’s output really boils down to the inputs you provide. The more thought you put into what you ask, the better the model can meet your needs and the more likely its answers are to be accurate. Whether you need an insightful report or just want a creative story, your inputs are the starting point that shapes everything that comes after.

Types of Inputs that Affect LLM Performance

Not all inputs are created equal. When you’re working with a large language model, the way you phrase your request can make or break the response quality. Let’s dig into the different types of inputs and how each one affects what you get back.

Structured Prompts vs. Unstructured Prompts

The first thing to consider is whether your prompt is structured or unstructured. Structured prompts are like a set of clear instructions. You’re telling the model exactly what you need.

Think of it like giving someone a recipe to follow. If you write something like, “List three benefits of using cloud storage for small businesses,” the model knows you want exactly three specific points in list form, and it is likely to deliver just that.

Unstructured prompts, on the other hand, are more open-ended. They’re like asking someone, “Tell me about cloud storage.” You’ll still get an answer, but it might be a rambling overview rather than the concise, targeted response you’re after.

Unstructured prompts can be great if you’re exploring ideas or need a broad take, but when precision matters, structure is your friend.

Contextual vs. Non-Contextual Inputs

Context is everything. Input prompts that include relevant context make it easier for the model to understand exactly what you’re asking. For instance, if you’re asking about “Paris,” you could mean the city in France, the prince from Greek mythology, or even a pop culture celebrity such as Paris Hilton.

But when you provide a prompt like, “Tell me about the best neighborhoods to visit in Paris, France,” you’re removing the guesswork. You’re telling the model exactly what kind of information you need, which means the answer will be more relevant and accurate.

When your input is non-contextual, the model is left to guess your intent. And while LLMs are good at picking up on hints, they’re not mind readers. Providing additional context—like explaining the subject, specifying a timeframe, or pointing to a particular focus—makes all the difference between a good answer and a great one.

Length and Complexity of Inputs

There’s also a balance to strike when it comes to input length and complexity. Short prompts can work, but they often leave too much to interpretation.

A prompt like “Explain machine learning” is going to result in a very general answer, which may or may not meet your expectations. But when you give a detailed input—“Explain machine learning in simple terms, focusing on real-world examples related to healthcare”—you’re adding more ingredients to the mix, which helps the model craft a richer and more focused response.

However, complexity should be used wisely. Too many details or multiple questions in one prompt can confuse the model, leading to a response that’s muddled or off-topic. Keeping inputs clear, concise, and focused on one task at a time is the best way to ensure you get what you’re looking for.

Bias and Ambiguity in Inputs

Even with the best prompts, it’s easy to introduce bias or ambiguity without realizing it. The way you phrase a question can nudge an LLM in a particular direction, sometimes resulting in a response that’s not entirely balanced or even misleading.

Recognizing Bias in Prompts

Bias in prompts is often subtle, but it can significantly affect the quality and neutrality of the response you receive. If you start a prompt with a preconceived notion, the model will likely reflect that notion back to you, producing a response that is one-sided or skewed to some degree.

For example, if you ask, “Why is remote work less productive than office work?” you’re already assuming remote work is inferior. The model will pick up on this and provide an answer that supports the premise, even if it isn’t necessarily true.

Instead, try framing your input in a neutral way, like, “How does productivity compare between remote work and office work?” This approach allows the model to provide a balanced response without leaning towards an assumption you made in your phrasing. The key is to be conscious of the language you use and how it might lead the model to a particular conclusion.

Managing Ambiguity

Ambiguity is another challenge that often sneaks into prompts without you noticing. If your input is vague, the model can interpret it in a number of ways, which can lead to responses that are too general, irrelevant, or not at all what you wanted.

Consider a prompt like, “Tell me about technology.” That’s such a broad request that it’s hard for the model to know where to begin. Are you asking about the history of technology, current trends, or its impact on society?

To get better results, try to remove ambiguity by specifying exactly what you need. Instead of “Tell me about technology,” you could say, “Explain the impact of AI on workplace efficiency.” This way, the model has clear guidance on the topic, making it more likely to produce a response that’s on point and useful.

Examples of Bias and Ambiguity in Inputs

Let’s look at a few examples to highlight how bias and ambiguity can shape the outputs you get:

Biased Input: “Why is renewable energy always unreliable?”

Likely Response: The model may provide arguments supporting the unreliability of renewable energy, focusing only on negative aspects.

Neutral Input: “What are the challenges and benefits of renewable energy?”

Likely Response: The model gives a balanced overview, discussing both pros and cons, which is far more informative.

Ambiguous Input: “What’s the future of transportation?”

Likely Response: The answer could go anywhere—from electric cars to hyperloop systems—depending on how the model interprets “future.”

Specific Input: “What advancements in electric vehicles can we expect over the next decade?”

Likely Response: A focused answer about electric vehicles, with relevant trends and technological developments.

Being aware of bias and ambiguity when crafting inputs can dramatically improve the quality of the outputs you receive. Always aim for neutral language to avoid unintentionally leading the model, and clarify your requests to ensure the model understands your intent.

The clearer you are, the better the model can perform, and that means getting the answers you need without unnecessary guesswork or slant.

Techniques for Improving Input Quality

Getting the best results from an LLM isn’t just about the technology behind it—it’s about how you communicate with it. Crafting effective prompts is an art, and there are several techniques you can use to improve the quality of your inputs. Let’s explore some simple but powerful ways to optimize how you ask questions to get better responses.

Prompt Engineering

Prompt engineering is all about designing inputs that maximize the quality of the output. It’s a fancy artificial intelligence term for a straightforward idea: If you take time to thoughtfully craft what you’re asking, you’ll get more useful and relevant responses.

Here are a few techniques for better prompt engineering.

- Be Specific: Instead of asking something broad like, “Tell me about AI,” you could say, “Explain how AI can be used to improve customer service in retail.” The more specific you are, the more tailored the response will be.

- Use Direct Requests: If you need a list, say so. For example, “List three key challenges of data integration” is more effective than “What are the challenges of data integration?” This makes it easier for the model to provide exactly what you need.

- Add Instructions: You can guide the model by adding instructions directly in the prompt. For instance, “Explain machine learning in simple terms, as if you’re explaining to someone with no technical background.” This helps adjust the complexity and tone of the response to suit your audience.
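If you build prompts in code, for example inside an app that calls an LLM, the three techniques above translate naturally into a small helper that keeps the task, format, and audience instructions separate. The following is a minimal Python sketch; `build_prompt` and its parameter names are hypothetical, not part of any LLM library, and the context line is an invented illustration. The returned string is what you would send to whichever model you use.

```python
def build_prompt(task: str, context: str = "", output_format: str = "", audience: str = "") -> str:
    """Combine a specific task with optional context, format, and audience instructions."""
    parts = [task.strip()]
    if context:
        parts.append(f"Context: {context.strip()}")
    if output_format:
        parts.append(f"Format: {output_format.strip()}")
    if audience:
        parts.append(f"Audience: {audience.strip()}")
    # One instruction per line keeps each requirement easy to see and to edit later.
    return "\n".join(parts)


prompt = build_prompt(
    task="Explain how AI can be used to improve customer service in retail.",
    context="The reader runs a small chain of clothing stores.",  # hypothetical example context
    output_format="List three key uses, one sentence each.",
    audience="Someone with no technical background.",
)
print(prompt)
```

Putting each instruction on its own line is a design choice, not a requirement; the point is that every element recommended above (a specific task, a direct request for a list, and an explicit audience) is stated outright rather than left for the model to guess.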

Iterative Prompt Refinement

Writing a good prompt might not happen on the first try. Sometimes, good prompt engineering means going through a few versions of the prompt to get the response you’re looking for. This process is known as iterative prompt refinement.

Start with an initial prompt, see how the model responds, and then tweak the input to be clearer or add context. For example, if your original question about “workplace technology” resulted in a vague answer, you could refine it to, “How do collaborative tools like Slack and Microsoft Teams improve workplace productivity?”

Then, learn from the outputs by treating each response as feedback. If the response isn’t quite right, figure out why. Was the question too broad? Was context missing? This iterative cycle is a great way to gradually improve the quality of your inputs.

Using Examples in Prompts

Sometimes, showing is better than telling. If you want the model to follow a specific format or provide certain types of answers, you can include examples in your prompt.

- Give a Template: If you want a response in a specific format, provide a template. For example: “Summarize this article in three numbered points, like this: 1. Main Idea, 2. Key Detail, 3. Conclusion.” This helps the model understand exactly how you want the output structured.

- Illustrate with Scenarios: When you need a particular tone or level of detail, you can add an example. For instance, “Explain this concept like you would if you were talking to a high school student. For example, imagine you’re explaining it in a classroom setting.”
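Including one or more worked examples in a prompt is commonly called few-shot prompting. Below is a minimal Python sketch of that idea; `few_shot_prompt` is a hypothetical helper, and the Input/Output labels and the sample explanation are illustrative assumptions rather than a format any particular model requires.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]], new_input: str) -> str:
    """Build a prompt that shows the model worked examples before the real request."""
    lines = [instruction, ""]
    for example_input, example_output in examples:
        # Each example pairs an input with the kind of answer we want back.
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
        lines.append("")
    # End with the new input and an empty "Output:" for the model to complete.
    lines.append(f"Input: {new_input}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = few_shot_prompt(
    instruction="Explain each concept the way you would to a high school student in a classroom.",
    examples=[
        (
            "Cloud storage",
            "Think of it as a locker you can open from any computer: your files live on "
            "someone else's servers, and you reach them over the internet.",
        ),
    ],
    new_input="Machine learning",
)
print(prompt)
```

The example answer sets the tone and length you want, so the model has a concrete pattern to imitate instead of guessing what “simple terms” means to you.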

Avoiding Common Pitfalls

It’s tempting to ask multiple questions in one prompt, but overloading a prompt this way can confuse the model. Instead of, “What is AI, and how can it be used in healthcare and what are its limitations?” break it down into parts. This way, the model can focus on answering each question clearly and thoroughly.
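When you call a model from code, the same advice applies: send each question as its own request instead of one overloaded prompt. In this minimal Python sketch, `ask_model` stands in for whatever client call your LLM provider offers; it is a hypothetical parameter, and `fake_model` is a placeholder so the example runs without any network access.

```python
from typing import Callable


def ask_one_at_a_time(questions: list[str], ask_model: Callable[[str], str]) -> dict[str, str]:
    """Send each focused question separately and collect the answers by question."""
    return {question: ask_model(question) for question in questions}


# Overloaded version, to avoid:
# "What is AI, and how can it be used in healthcare and what are its limitations?"
focused_questions = [
    "What is AI?",
    "How can AI be used in healthcare?",
    "What are the limitations of AI in healthcare?",
]


def fake_model(prompt: str) -> str:
    # Placeholder for a real LLM call; it simply echoes the prompt it received.
    return f"(answer to: {prompt})"


answers = ask_one_at_a_time(focused_questions, fake_model)
for question, answer in answers.items():
    print(f"{question} -> {answer}")
```

Each answer can then be checked on its own, which also makes iterative refinement easier: if one answer misses the mark, you only need to rework that one question.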

Vagueness is another common pitfall. A prompt like, “What’s going on with technology?” is too general. Instead, aim for a clear, direct question, such as “What are the key trends in AI development for 2024?” The more you narrow it down, the better the model can help you.

Practical Scenarios: How LLM Inputs Impact Use Cases

It’s one thing to understand the theory behind crafting effective inputs, but it’s another to see it in action. Let’s look at some practical scenarios where the quality of your inputs can dramatically influence what an LLM delivers.

Content Generation

When using an LLM for content creation, the input you provide will directly shape the output in terms of tone, detail, and structure.

Scenario 1: Blog Post Introduction

Generic Prompt: “Write an introduction about remote work.”

Likely Output: You’ll get a basic, general introduction about remote work that could apply to any context—probably not very unique or tailored.

Refined Prompt: “Write an engaging introduction for a blog post discussing the benefits of remote work for small tech startups, focusing on productivity and employee satisfaction.”

Likely Output: Now, the response is focused, relevant to small tech startups, and will dive right into productivity and employee satisfaction, giving you a much more targeted and usable piece.

Scenario 2: Product Descriptions

Generic Prompt: “Describe a smartwatch.”

Likely Output: A broad overview of smartwatch features—useful, but not very specific.

Refined Prompt: “Write a product description for a fitness-focused smartwatch that highlights heart rate monitoring, GPS tracking, and its waterproof design.”

Likely Output: A detailed description that emphasizes the features you want to highlight, making it ideal for marketing purposes.

Data Analysis and Q&A

For data analysis or providing answers to specific questions, clarity is crucial. A vague input can lead to a vague output, while precision brings the best results.

Scenario 1: Business Insights

Generic Prompt: “What is happening in the retail industry?”

Likely Output: You’ll get a broad summary of trends that could cover anything from customer behavior to technology advancements—lots of information, but maybe not exactly what you need.

Refined Prompt: “Provide insights on how e-commerce growth is affecting physical retail stores in the U.S. in 2024.”

Likely Output: A focused response about the impact of e-commerce on physical stores, tailored to a specific year and region, giving you actionable insights.

Scenario 2: Question & Answer

Generic Prompt: “Tell me about renewable energy.”

Likely Output: You’ll get a general explanation that could cover anything from solar panels to wind turbines, without much depth.

Refined Prompt: “Explain how solar power is being adopted by residential homeowners in California, focusing on incentives and cost-saving benefits.”

Likely Output: A response that directly addresses adoption, incentives, and cost savings, which is far more useful if you’re looking for specifics about residential solar use in California.

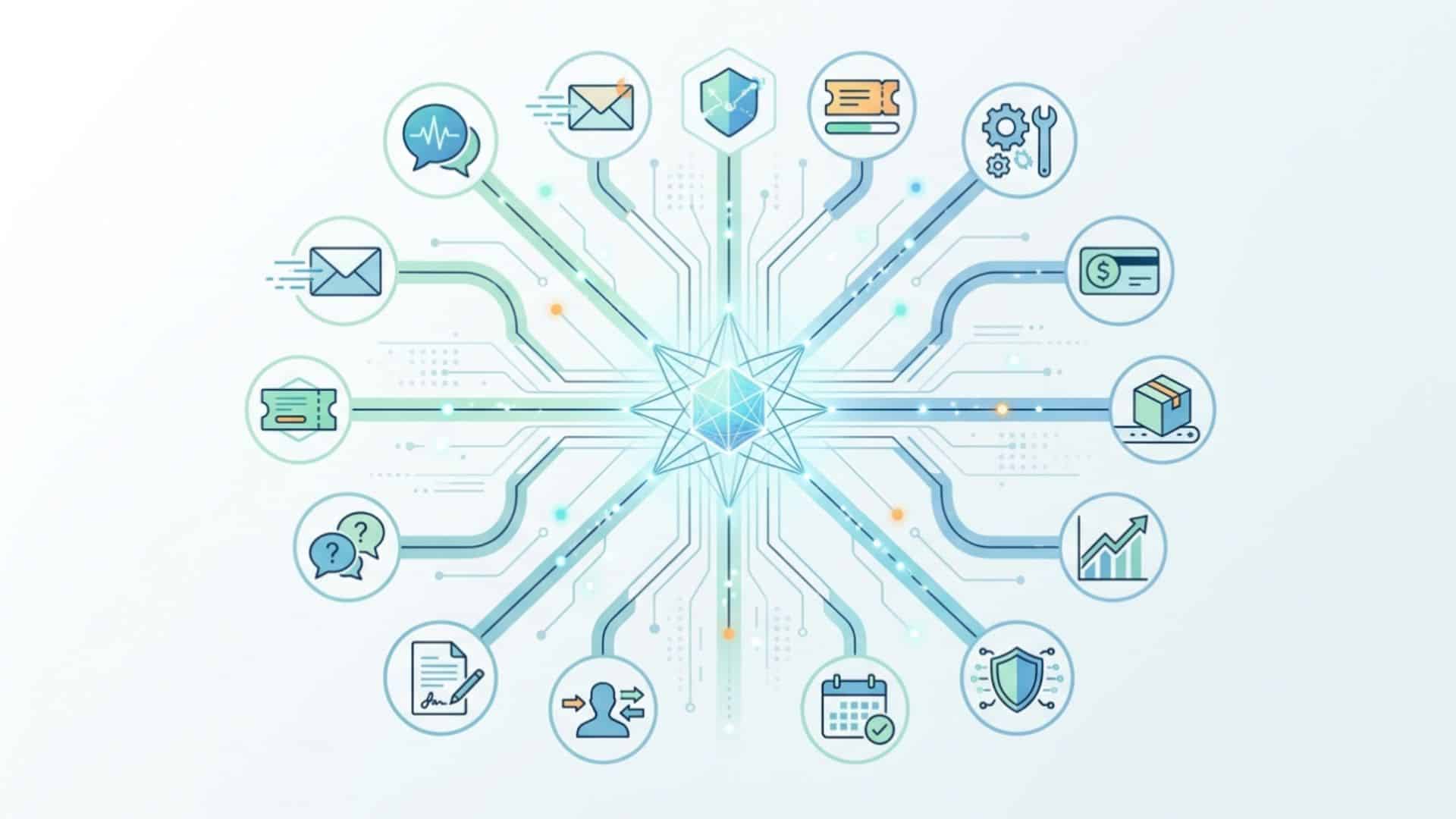

Customer Support Automation

When using LLMs to automate customer interactions, crafting clear and empathetic inputs is key to ensuring the output meets the needs of the customer.

Scenario 1: Customer Question About Pricing

Generic Prompt: “Explain the pricing.”

Likely Output: The model will give a broad response that might not address the specifics the customer needs—like whether it includes hidden fees or different subscription tiers.

Refined Prompt: “Explain our subscription pricing model, including details on the monthly cost, annual discounts, and any additional fees that might apply.”

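Likely Output: A thorough explanation that covers each aspect of pricing, provided the model has access to your actual pricing details (for example, because they are included in the prompt or supplied by your support system), so the customer has the information they need to make a decision.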

Scenario 2: Troubleshooting Help

Generic Prompt: “Help me with my internet issue.”

Likely Output: The model could respond with very general troubleshooting tips, which may or may not solve the problem.

Refined Prompt: “Provide step-by-step instructions to troubleshoot a slow internet connection for a customer using a Wi-Fi router at home.”

Likely Output: A specific set of steps that are much more likely to help the customer resolve their issue effectively.

Getting the Right Answer

Your interaction with an LLM is a two-way street—the clearer and more thoughtfully crafted your inputs, the better the outputs will be. By focusing on specific, well-crafted prompts, using context, and refining your inputs iteratively, you can unlock the true potential of LLMs.

Whether you’re using them for content creation, data analysis, or customer support, the key is to guide the model in a way that delivers exactly what you need. Mastering the art of input crafting isn’t just about getting an answer; it’s about getting the right answer far more consistently.