The world is changing because of artificial intelligence (AI), but for many businesses it’s not clear how we get from the way things are now to the hyper-productive utopia pitched by AI solutions. For many executives and VPs tasked with integrating AI into their business, their focus is on the practical challenges of implementation. These challenges appear to be so large they block the view of AI’s potential. How can a business leader be excited about the future if they can’t see how we get there?

These challenges are known to anyone who’s spent a few minutes exploring available AI solutions. Artificial intelligence for businesses (or “enterprise AI”) has three major problems: control, transparency, and trust. If you’ve already explored an AI solution and ended up on this article, you likely hit one of these three roadblocks. In this article we’ll detail each of these three challenges and a potential solution for the future.

The Challenge with AI is Knowledge Infrastructure

Let’s start with reality. Does artificial intelligence have the potential to change businesses and industries? No. Artificial intelligence is changing businesses and industries. This technology’s impact is not hypothetical. There are documented results.

McKinsey’s State of AI report for 2023 showed that, across multiple industries, an average of 20% of professionals have used generative AI tools for work. On average across all organizational functions, roughly 42% of respondents reported cost decreases due to AI adoption and 59% reported revenue increases due to AI adoption. The greatest cost savings were reported in service operations, corporate finance, and risk, while the greatest revenue increases were reported in manufacturing, risk, and product development.

The success of AI implementation is most prevalent in industries that lucked out by having the knowledge infrastructure needed to take advantage of AI technologies.

For example, our work at Shelf has primarily focused on call centers within major organizations. Within these divisions, our clients have reported their agents have reduced their time spent resolving customer questions by 80 percent. Average handle time has gone down by 30 percent. Considering the size of our clients, these dramatic changes in productivity warrant rethinking the department’s structure and expectations — all thanks to enterprise AI.

Knowledge infrastructure is the true challenge for implementing AI into your business. It is the origin of the three previously referenced problems. For some readers “knowledge infrastructure” is a new term, so let’s use it in an actual example.

The most popular AI solutions are large language models (LLMs). These technologies allow users to input requests as if they’re conversing with another person. This other person happens to possess superhuman knowledge and an even more superhuman typing speed. Many LLMs acquire their knowledge from text scraped from the internet, which is another way of saying the technology has read a very large share of the public web and retained much of what it found there. In effect, the LLM uses the internet as its central repository of information (or “knowledge base”). Depending on your prompts to the LLM, it will give you different answers based on what it learned from that scraped text. This means everything the LLM produces relies on the infrastructure of its knowledge.

The internet is the knowledge infrastructure for many available AI solutions, which is why these technologies have challenges. LLMs may have incredible intelligence for filtering their knowledge base for relevant information, but knowledge is like any other infrastructure. It’s rooted and intractable. If your knowledge infrastructure isn’t serving its purpose, then the problem can’t be resolved by a smarter tool. You need better infrastructure. In this instance, you need to structure your knowledge as an infrastructure for these tools.

If you don’t, then you’ll run into some familiar roadblocks. We’ve bucketed the three major challenges for enterprise AI below:

Challenge with AI 1: Control

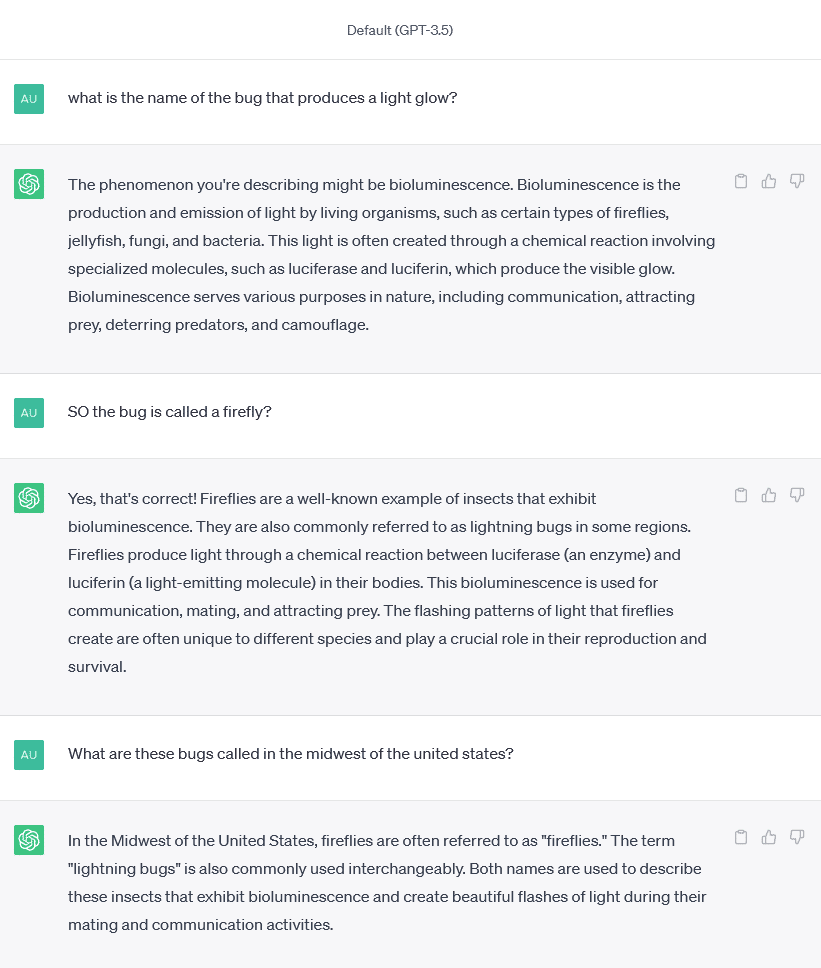

Whatever AI solution you use, you’ll need the ability to control its knowledge infrastructure. Popular AI tools (such as ChatGPT or LLaMA) use the internet as their knowledge infrastructure. This provides a lot of information, but may result in prompts receiving answers that are imprecise or irrelevant.

Let’s start with a simple and innocuous example. If you ask an LLM with unlimited access to the internet “what is the common name for the small bug that produces a bright glow?” the LLM will likely tell you these bugs are called “fireflies.” This answer is technically correct, but if your company is based in the Midwest, where everyone calls these bugs “lightning bugs,” then the answer is imprecise. Ideally, you could control the knowledge infrastructure to be tuned to the most relevant information for your region.

This example becomes an operational risk when it is applied to the business equivalent of geographical misunderstanding. Imagine a multinational corporation gives an AI solution complete access to all of its digital content and files. Someone in the marketing department for North America requests “the most recent version of our logo.” If there’s no control over the solution’s access to knowledge, then the “most recent version” of “our” logo could be a document saved five minutes ago by the marketing department in Asia.

A lack of control over your knowledge infrastructure can be an existential threat for some industries, such as insurance or healthcare. Providing personal information to an LLM could raise the risk of sensitive information being accessed by unauthorized users (this was humorously depicted in the webcomic XKCD). Your knowledge infrastructure needs to be able to identify what information can be supplied to which users in order to protect your organization and customers.
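The idea of deciding what information can be supplied to which users can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the `Document` and `User` classes, the region codes, and the role names are all hypothetical. It filters a knowledge base down to what one user is allowed to see before anything reaches an LLM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    region: str               # hypothetical region code, e.g. "NA" or "APAC"
    allowed_roles: frozenset  # roles permitted to read this document


@dataclass(frozen=True)
class User:
    name: str
    region: str
    roles: frozenset


def visible_documents(user, documents):
    """Keep documents from the user's region that share at least one role with the user."""
    return [
        doc for doc in documents
        if doc.region == user.region and doc.allowed_roles & user.roles
    ]


documents = [
    Document("Logo v7 (North America)", "NA", frozenset({"marketing"})),
    Document("Logo v9 (Asia)", "APAC", frozenset({"marketing"})),
    Document("Patient claims export", "NA", frozenset({"claims_admin"})),
]

marketer = User("Dana", "NA", frozenset({"marketing"}))
titles = [doc.title for doc in visible_documents(marketer, documents)]
print(titles)
```

With this filter in place, the North American marketer sees only the North American logo, and the claims export stays out of reach because the marketer lacks the required role.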

Control also means maintaining ownership of your data. You don’t want your proprietary information to be used to answer prompts from other users outside your organization.

One method of control would be to see how answers were provided, which brings us to the next challenge.

Challenge with AI 2: Transparency

Have you ever gotten a wrong answer from an LLM? Have you tried asking how it got to that answer? You may be surprised to find the answer is often “I don’t know.”

Social media has many clever users screenshotting when an LLM has contradicted itself or provided explicitly false information (such as “2+2=5”). More troubling than the false information is the LLM’s inability to explain how it made the mistake in the first place. This lack of transparency is a known problem with all currently available tools.

This phenomenon has created unease among businesses, which would feel more comfortable if they could audit how a prompt led to an unsatisfactory response. Keeping a person involved in reviewing outputs this way is sometimes referred to as human in the loop (HITL). The term reflects the uncertainty users feel toward this new technology, likely due to its incredible advancement compared to similar technology in recent years. The stopgap solution to this unease is to provide an organization with the ability to see how answers are produced.
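One way to picture “seeing how answers are produced” is to return the supporting passages, with their source names, alongside any answer. The sketch below is an assumption-heavy illustration: the file names and passages are invented, and simple word overlap stands in for whatever retrieval method a real system would use.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve_with_sources(question, knowledge_base, top_k=2):
    """Rank passages by words shared with the question, keeping each source id."""
    question_words = tokenize(question)
    scored = []
    for source_id, passage in knowledge_base.items():
        overlap = len(question_words & tokenize(passage))
        if overlap > 0:
            scored.append((overlap, source_id, passage))
    # Highest overlap first; ties are broken alphabetically by source id.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [
        {"source": source_id, "passage": passage, "score": score}
        for score, source_id, passage in scored[:top_k]
    ]


# Hypothetical knowledge base: source name -> passage text.
knowledge_base = {
    "returns-policy.md": "Customers may return items within 30 days of purchase.",
    "shipping-faq.md": "Standard shipping takes five business days.",
    "brand-guide.md": "Use the blue logo on light backgrounds.",
}

evidence = retrieve_with_sources(
    "How many days do customers have to return items?", knowledge_base
)
for item in evidence:
    print(item["source"], item["score"])
```

Because every passage carries its source, a reviewer can trace an unsatisfactory answer back to the document that produced it.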

You may be familiar with the quote “any sufficiently advanced technology is indistinguishable from magic,” but this quote was never meant to advocate for the unknowable nature of magic tricks. An audience may be entertained by the spectacle of magical technology, but a company executive wants to know how exactly it pulled that rabbit from a hat.

If an AI solution provided control and transparency, it might resolve the final and most important challenge for adopting AI.

Challenge with AI 3: Trust

The prevailing challenge for enterprise AI is contained in one word: hallucination. This is the euphemism for when an LLM provides false, incorrect, or fabricated information. It is the biggest obstacle to adopting artificial intelligence in business strategy because it captures the distrust users have for the technology.

Hallucinations may be the result of a lack of control leading to LLMs pulling information that isn’t relevant. Or hallucinations may go unexplained because of a lack of transparency into how answers are produced. Hallucinations might also be resolved if the technology supporting LLMs develops for a few more years. All of those challenges could be resolved with time, but there’s another solution available right now.

Solving AI Challenges Requires Knowledge as an Infrastructure

Every one of these challenges can be resolved by not treating your organization’s information as a data dump, but integrating your knowledge as an infrastructure for AI.

Understanding your knowledge as an infrastructure for AI means taking your organization’s vast information and making it readable to these tools. This means centralizing your knowledge across different applications, folders, and databases. It also means resolving common issues such as duplicates, contradictions, or gaps in knowledge.
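As a small illustration of the cleanup step, the sketch below flags duplicate content stored in different places. The file paths and text are made up, and the approach (hashing text after normalizing case and whitespace) is just one simple option; it only catches copies that are identical after normalization.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash the text after lowercasing it and collapsing runs of whitespace."""
    normalized = re.sub(r"\s+", " ", text.strip().lower())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(files):
    """Group file paths whose normalized contents are identical."""
    groups = defaultdict(list)
    for path, text in files.items():
        groups[fingerprint(text)].append(path)
    return sorted(sorted(paths) for paths in groups.values() if len(paths) > 1)


# Hypothetical files scattered across different applications.
files = {
    "sharepoint/refund-policy.docx": "Refunds are issued within 14 days.",
    "wiki/refunds": "refunds are issued   within 14 days.\n",
    "drive/old/refund-policy.txt": "Refunds are issued within 30 days.",
}

duplicates = find_duplicates(files)
print(duplicates)
```

Note that the third file is not flagged: it contradicts the other two (30 days versus 14) rather than duplicating them, and catching contradictions takes more than a hash comparison.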

If your organization is diligent with its knowledge management, you may be ready to adopt AI already. Schedule a meeting with our knowledge experts to see what your organization can do to resolve these challenges to adopting artificial intelligence as your business strategy.